Multimodal Affective and Social Interaction

Building machine learning models to detect contextual human communication while examining how users manage digital personas and seek information through interactive social platforms.

Outcome

JCSCW, CSCW 25, ICTD 22, CSCW 20, ICCBD 18, ICMI 18, HCC 19, TransAI 19 (×2), APSEC 18, and OzCHI 18

Overview

A core area of my earlier research addressed the technical challenge of building machine learning models that recognize the nuance and actual intent behind complex communication behaviors such as sarcasm and satire. We also investigated how these communication styles influence public opinion and platform dynamics.

Approach

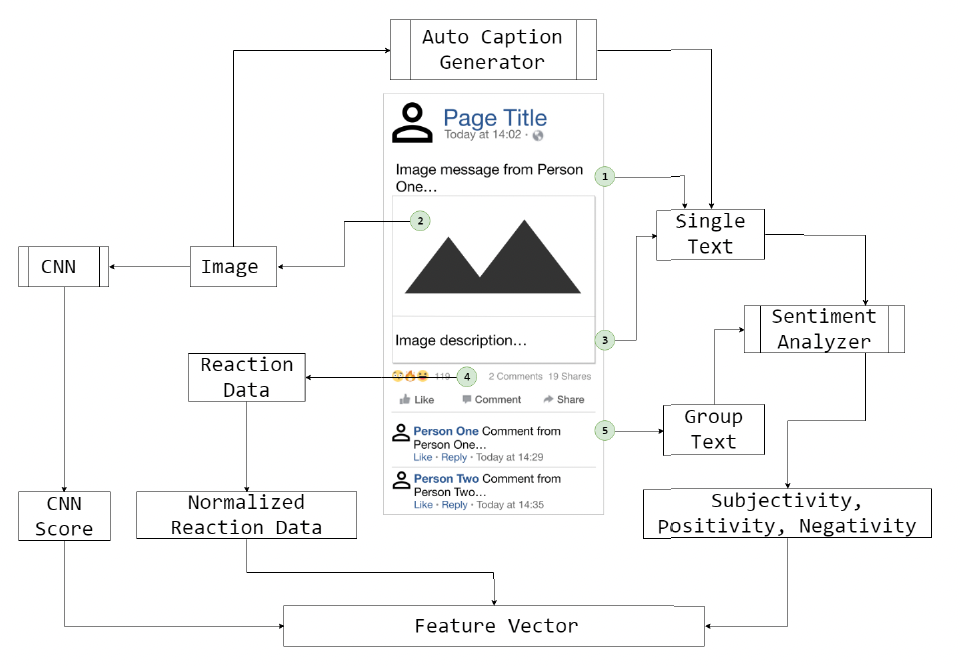

We employ supervised learning to develop multimodal neural network models that go beyond text-only analysis. These models integrate visual cues from images, patterns of user interaction (such as comments and reactions), and narrative trajectories. We ground our computational work in psycholinguistic theory to define the structural characteristics of sarcasm and humor in social media settings. Additionally, we combine interviews and trace data with social science and communication theories to study how influencers manage sociopolitical discourse on platforms like Facebook.
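As a rough illustration of what integrating several modalities can look like, the sketch below concatenates per-modality feature vectors (late fusion) and scores them with a logistic unit. It is a minimal sketch and not the models from the papers: the function names, feature values, and weights are all hypothetical, and in a supervised setting the weights would be learned from labeled posts.

```python
import math
from typing import Sequence


def fuse_features(
    text_vec: Sequence[float],
    image_vec: Sequence[float],
    interaction_vec: Sequence[float],
) -> list[float]:
    """Late fusion by concatenation: one flat vector per post."""
    return [*text_vec, *image_vec, *interaction_vec]


def sarcasm_probability(
    features: Sequence[float], weights: Sequence[float], bias: float
) -> float:
    """Logistic score over the fused features (weights are assumed given)."""
    if len(features) != len(weights):
        raise ValueError("features and weights must have the same length")
    score = sum(f * w for f, w in zip(features, weights)) + bias
    return 1.0 / (1.0 + math.exp(-score))


# Hypothetical toy features for a single post (illustrative values only).
text_vec = [0.2, -0.1]        # e.g., output of a text encoder
image_vec = [0.7]             # e.g., output of an image encoder
interaction_vec = [0.5, 0.3]  # e.g., reaction and comment statistics

fused = fuse_features(text_vec, image_vec, interaction_vec)

# Untrained placeholder weights; supervised training would replace these.
weights = [0.0] * len(fused)
prob = sarcasm_probability(fused, weights, bias=0.0)
```

Concatenation is only one of several fusion strategies; it is used here because it keeps each modality's contribution easy to inspect.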

Key Contributions

We showed why sarcasm cannot be reliably detected from text alone and often depends on visual and contextual cues. Our work offered an early demonstration of multimodal sarcasm detection across text, images, and videos. A key outcome was a set of multimodal datasets and models for sarcasm detection in social media that account for user interactions (e.g., reactions, comments), which provide important signals for understanding sarcastic intent. This work also connects sarcasm to broader questions of misinformation, interpretation, and meaning in digital content through the concepts of tone and storytelling style.